Engineers get under robot's skin to heighten senses

By Tom Fleischman

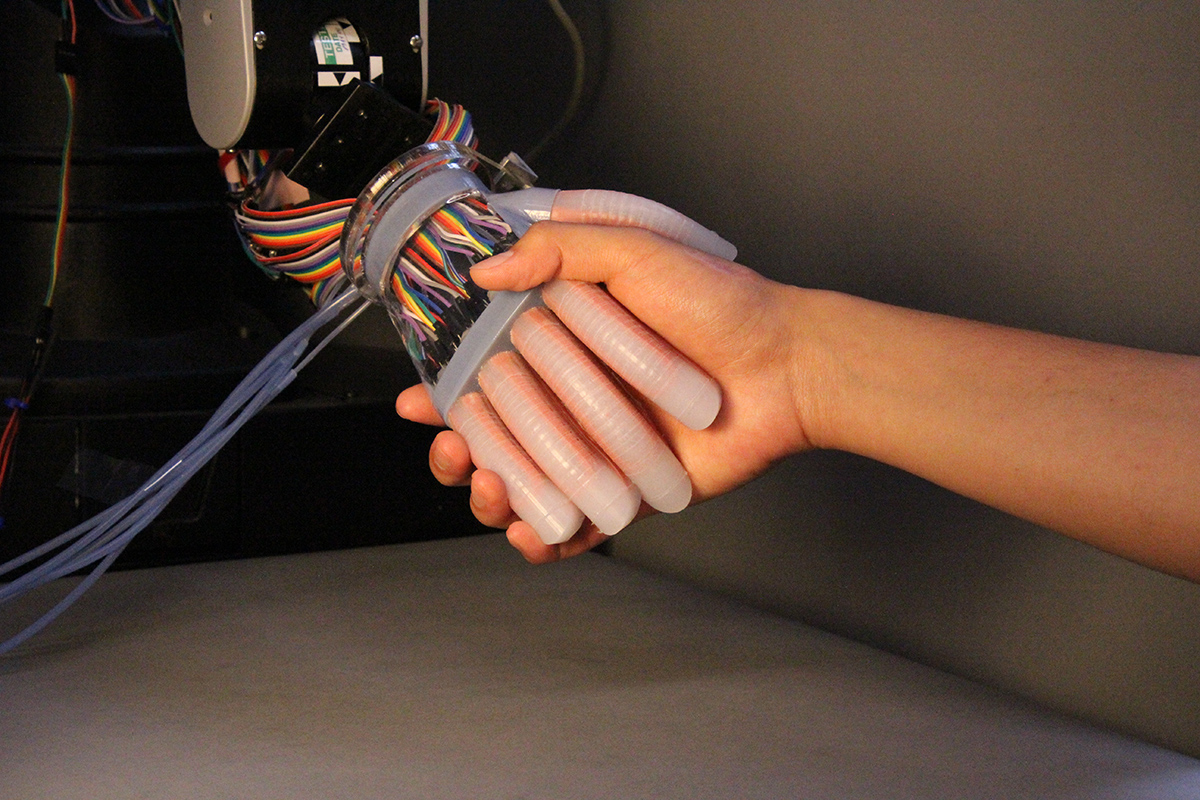

Most robots achieve grasping and tactile sensing through motorized means, which can be excessively bulky and rigid. A Cornell group has devised a way for a soft robot to feel its surroundings internally, in much the same way humans do.

A group led by Robert Shepherd, assistant professor of mechanical and aerospace engineering and principal investigator of the Organic Robotics Lab, has published a paper describing how stretchable optical waveguides act as curvature, elongation and force sensors in a soft robotic hand.

Doctoral student Huichan Zhao is lead author of “Optoelectronically Innervated Soft Prosthetic Hand via Stretchable Optical Waveguides,” which is featured in the debut edition of Science Robotics. The paper was published Dec. 6; doctoral students Kevin O’Brien and Shuo Li, both of Shepherd’s lab, also contributed.

“Most robots today have sensors on the outside of the body that detect things from the surface,” Zhao said. “Our sensors are integrated within the body, so they can actually detect forces being transmitted through the thickness of the robot, a lot like we and all organisms do when we feel pain, for example.”

Optical waveguides have been in use since the early 1970s for numerous sensing functions, including tactile, position and acoustic sensing. Fabrication was originally a complicated process, but the advent of soft lithography and 3-D printing over the last 20 years has led to the development of elastomeric sensors that are easily produced and incorporated into soft robotic applications.

Shepherd’s group employed a four-step soft lithography process to produce the core (through which light propagates) and the cladding (the outer surface of the waveguide), which also houses the LED (light-emitting diode) and the photodiode.

The more the prosthetic hand deforms, the more light is lost through the core. That variable loss of light, as detected by the photodiode, is what allows the prosthesis to “sense” its surroundings.

“If no light was lost when we bend the prosthesis, we wouldn’t get any information about the state of the sensor,” Shepherd said. “The amount of loss is dependent on how it’s bent.”
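The sensing principle above, where bending changes how much light reaches the photodiode, can be sketched numerically. This is a minimal illustration under stated assumptions, not the lab's method: it assumes the photodiode reading can be expressed as transmitted optical power, uses the standard decibel definition of optical loss, and maps loss to a bend angle through a hypothetical calibration table (the numbers are placeholders, not measurements from the paper) using linear interpolation.

```python
import math

def optical_loss_db(p_in_mw: float, p_out_mw: float) -> float:
    """Power lost along the waveguide, in decibels (standard definition)."""
    if p_in_mw <= 0 or p_out_mw <= 0:
        raise ValueError("optical powers must be positive")
    return -10.0 * math.log10(p_out_mw / p_in_mw)

# HYPOTHETICAL calibration table: (loss in dB, bend angle in degrees).
# Placeholder values for illustration only; a real sensor would be
# calibrated individually and may not respond linearly.
CALIBRATION = [(0.0, 0.0), (0.5, 30.0), (1.2, 60.0), (2.0, 90.0)]

def estimate_bend_deg(loss_db: float, table=CALIBRATION) -> float:
    """Linearly interpolate a bend angle from a monotonic loss calibration."""
    # Clamp readings below the first calibration point.
    if loss_db <= table[0][0]:
        return table[0][1]
    # Find the calibration segment containing this loss and interpolate.
    for (l0, a0), (l1, a1) in zip(table, table[1:]):
        if loss_db <= l1:
            return a0 + (a1 - a0) * (loss_db - l0) / (l1 - l0)
    # Clamp readings beyond the last calibration point.
    return table[-1][1]
```

For example, a reading of 0.25 dB falls halfway between the first two hypothetical calibration points and yields a 15-degree estimate. The key idea, as Shepherd notes, is that the loss itself carries the information: with no loss, every bend would produce the same reading.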

The group used its optoelectronic prosthesis to perform a variety of tasks, including grasping and probing for both shape and texture. Most notably, the hand was able to scan three tomatoes and determine, by softness, which was the ripest.

Zhao said this technology has many potential uses beyond prostheses, including bio-inspired robots, which Shepherd has explored along with Mason Peck, associate professor of mechanical and aerospace engineering, for use in space exploration.

“That project has no sensory feedback,” Shepherd said, referring to the collaboration with Peck, “but if we did have sensors, we could monitor in real time the shape change during combustion [through water electrolysis] and develop better actuation sequences to make it move faster.”

Future work on optical waveguides in soft robotics will focus on increasing sensory capabilities, in part by 3-D printing more complex sensor shapes and by incorporating machine learning to decouple signals from a larger number of sensors. “Right now,” Shepherd said, “it’s hard to localize where a touch is coming from.”

This work was supported by a grant from the Air Force Office of Scientific Research, and made use of the Cornell NanoScale Science and Technology Facility and the Cornell Center for Materials Research, both of which are supported by the National Science Foundation.
