Cornell part of $25M NSF effort to untangle future physics data

By Tom Fleischman

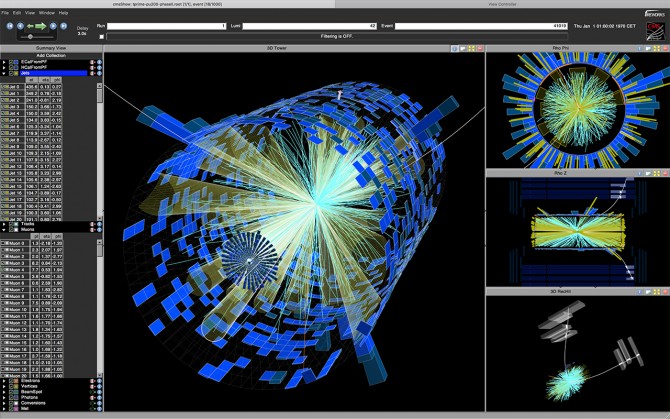

Particle accelerators such as the Large Hadron Collider (LHC) at the European Organization for Nuclear Research (CERN) produce massive amounts of data that help answer long-held questions regarding Earth and the far reaches of the universe. The Higgs boson, which had been the missing link in the Standard Model of Particle Physics, was discovered there in 2012 and earned researchers the 2013 Nobel Prize in physics.

And while the LHC – a 17-mile synchrotron ring straddling the Swiss-French border – already produces mountains of data to be analyzed by the world’s particle physicists, an ongoing upgrade will result in a tenfold increase in luminosity (the number of particles in a given space at a given time) and even more data to store. That will require a similar increase in processing and storage capacity.

To address that challenge, the National Science Foundation (NSF) on Sept. 4 launched the Institute for Research and Innovation in Software for High-Energy Physics (IRIS-HEP), a five-year, $25 million effort to tackle the unprecedented torrent of data that will come from the high-luminosity LHC.

Researchers at Cornell will play a major role in developing computational software robust enough to handle the tsunami of data expected from the upgraded LHC. Of the $25 million from the NSF, $1 million will come to Cornell; Peter Wittich, physics professor and director of the Laboratory of Elementary Particle Physics, is principal investigator for the Cornell portion of the project.

Wittich’s team on this project includes Dan Riley, researcher at the Cornell Laboratory for Accelerator-based Sciences and Education; Steve Lantz, senior research associate at Cornell’s Center for Advanced Computing; and Kevin McDermott, doctoral student in the Cornell CMS research group.

Peter Elmer, computational physicist at Princeton University and CERN, is the NSF’s lead investigator for IRIS-HEP. Elmer said the institute will be an “intellectual hub” for software research and development aimed at getting the most from the LHC upgrade, slated for completion in 2026.

“We are now searching for the next layer of physics beyond the Standard Model,” Elmer said. “[IRIS-HEP] will … allow us to explore high-luminosity LHC data and make discoveries.”

Wittich, also a CERN scientist, said he and his team will be focusing on improving the performance of a particular part of the data analysis software toolbox in anticipation of the high-luminosity era of the LHC.

“Specifically, the piece of software is the part that takes the individual energy deposits in the detector and plays ‘connect the dots’ to reconstruct the trajectories of the charged particles,” Wittich said. “With this upgrade at LHC, there will be a large increase in the number of proton-proton collisions per [event] – from around 40 to around 200.

“That means many more particles flying around,” he said, “and more confusion in trying to get the trajectories understood.”

This project, Wittich said, is also a great training ground for students to learn how to squeeze the most “bang for your buck” out of a piece of software. “Working with Steve [Lantz] and Dan [Riley] and our other collaborators,” Wittich said, “our students will be exposed to state-of-the-art computing techniques that will serve them well as they pursue their own endeavors.”

IRIS-HEP, a coalition of 17 research universities across the U.S., will receive $5 million per year for five years to develop innovative software and train the next generation of its users.

“It’s a crucial moment in physics,” said Bogdan Mihaila, the NSF program officer overseeing the IRIS-HEP award. “We know the Standard Model is incomplete. At the same time, there is a software grand challenge to analyze large sets of data, so we can throw away results we know and keep only what has the potential to provide new answers and new physics.”
