Car safety system could anticipate driver's mistakes

By Bill Steele

It may be a while yet before we have cars that drive themselves, but in the near future your car may help you drive. In particular, it could warn you when you’re about to do something stupid.

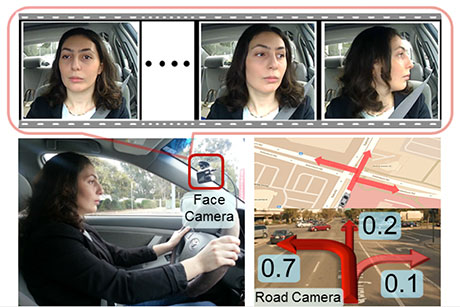

Cornell researchers have developed one crucial tool the car will need: a system that anticipates what the driver is going to do a few seconds before it happens. Some cars are already equipped with safety systems that monitor the car's movement and warn of an unsafe turn or lane change. But that warning comes too late, after the driver has already acted. The new computer algorithm observes the driver's body language, interprets it in the context of what is happening outside the car, and determines the probability that the driver will turn, change lanes or continue straight ahead.

“There are many systems now that monitor what’s going on outside the car,” explained Ashutosh Saxena, assistant professor of computer science. “Internal monitoring of the driver will be the next leap forward.” Saxena and graduate student Ashesh Jain will describe their system at a workshop on “Model Learning for Human-Robot Communication” at the 2015 Robotics: Science and Systems conference, July 16 in Rome.

By combining driver anticipation with radar or cameras that locate other vehicles, the car’s safety system could warn the driver when an anticipated action might be dangerous. The warning might be a light, a sound or even a vibration. “If there’s danger on the left, the left side of the steering wheel or the seat could vibrate,” Jain suggested.

Drawing on street maps and GPS information, the system might also give an “illegal turn” message if the driver were about to turn the wrong way onto a one-way street.

To develop the system, Saxena and colleagues recorded video of 10 drivers, along with video of the road ahead, over 1,180 miles of freeway and city driving during a two-month period. Using face detection and tracking software, a computer identified the drivers' head movements and learned to associate them with the turns and lane changes that followed, so that the final system can anticipate the driver's likely next action. The computer continuously reports its anticipations to the car’s central safety system.

In a test on a separate set of videos with different drivers, the system correctly predicted the driver’s actions 77.4 percent of the time, anticipating them an average of 3.53 seconds in advance. Those few extra seconds might save lives, Saxena said.

The system still needs refinement, the researchers noted. Six percent of the time, they found, face tracking was confused by shadows of passing trees and other lighting variations. The system can also be misled by drivers interacting with passengers. In some situations, such as turning from a turn-only lane, drivers don’t always give the same head cues; sometimes they rely on short-term memory of traffic conditions and don’t turn their heads to check. The solution may come down to tracking eye movements, the researchers said.

This is only a first step, Jain said, and incorporating it in a complete safety system is a job for automakers. Future improvements may include infrared cameras to observe at night and 3-D cameras for greater accuracy. Other inputs may be added, such as tactile sensors to monitor pressure on the steering wheel, and cameras or pressure sensors to observe what the driver’s feet are doing – perhaps to anticipate braking.

Observations could be extended to other activities, such as whether the driver is looking at a phone or a watch. The system could link directly to such wearable technologies, Jain said.

Also contributing to the research are graduate student Hema S. Koppula and Stanford University graduate students Bharad Raghavan and Shane Soh. Saxena’s work is supported by the U.S. Army Research Office, the Office of Naval Research and the National Science Foundation.
